Difference between revisions of "Directory:Jon Awbrey/Papers/Differential Analytic Turing Automata"

Jon Awbrey (talk | contribs) (update) |

Jon Awbrey (talk | contribs) (update) |

||

| Line 1,622: | Line 1,622: | ||

===Computation Summary for Logical Equality=== | ===Computation Summary for Logical Equality=== | ||

| − | Figure 2.1 shows the expansion of <math>g = \texttt{((u, v))}</math> over <math>[u, v]\!</math> to produce the expression: | + | Figure 2.1 shows the expansion of <math>g = \texttt{((} u \texttt{,~} v \texttt{))}\!</math> over <math>[u, v]\!</math> to produce the expression: |

{| align="center" cellpadding="8" width="90%" | {| align="center" cellpadding="8" width="90%" | ||

| − | | <math>\ | + | | |

| + | <math>\begin{matrix} | ||

| + | uv & + & \texttt{(} u \texttt{)(} v \texttt{)} | ||

| + | \end{matrix}</math> | ||

|} | |} | ||

| − | Figure 2.2 shows the expansion of <math>\mathrm{E}g = \texttt{((u + | + | Figure 2.2 shows the expansion of <math>\mathrm{E}g = \texttt{((} u + \mathrm{d}u \texttt{,~} v + \mathrm{d}v \texttt{))}\!</math> over <math>[u, v]\!</math> to produce the expression: |

| − | {| align="center" cellpadding="8" width="90%" | + | {| align="center" cellpadding="8" width="90%" |

| − | | <math>\ | + | | |

| + | <math>\begin{matrix} | ||

| + | uv \cdot \texttt{((} \mathrm{d}u \texttt{,~} \mathrm{d}v \texttt{))} & + & | ||

| + | u \texttt{(} v \texttt{)} \cdot \texttt{(} \mathrm{d}u \texttt{,~} \mathrm{d}v \texttt{)} & + & | ||

| + | \texttt{(} u \texttt{)} v \cdot \texttt{(} \mathrm{d}u \texttt{,~} \mathrm{d}v \texttt{)} & + & | ||

| + | \texttt{(} u \texttt{)(} v \texttt{)} \cdot \texttt{((} \mathrm{d}u \texttt{,~} \mathrm{d}v \texttt{))} | ||

| + | \end{matrix}</math> | ||

|} | |} | ||

| − | <math>\mathrm{E}g</math> tells you what you would have to do, from | + | In general, <math>\mathrm{E}g\!</math> tells you what you would have to do, from wherever you are in the universe <math>[u, v],\!</math> if you want to end up in a place where <math>g\!</math> is true. In this case, where the prevailing proposition <math>g\!</math> is <math>\texttt{((} u \texttt{,~} v \texttt{))},\!</math> the component <math>uv \cdot \texttt{((} \mathrm{d}u \texttt{,~} \mathrm{d}v \texttt{))}\!</math> of <math>\mathrm{E}g\!</math> tells you this: If <math>u\!</math> and <math>v\!</math> are both true where you are, then change either both or neither of <math>u\!</math> and <math>v\!</math> at the same time, and you will attain a place where <math>\texttt{((} u \texttt{,~} v \texttt{))}\!</math> is true. |

| − | Figure 2.3 shows the expansion of <math>\mathrm{D}g</math> over <math>[u, v]\!</math> to produce the expression: | + | Figure 2.3 shows the expansion of <math>\mathrm{D}g\!</math> over <math>[u, v]\!</math> to produce the expression: |

| − | {| align="center" cellpadding="8" width="90%" | + | {| align="center" cellpadding="8" width="90%" |

| − | | <math>\ | + | | |

| + | <math>\begin{matrix} | ||

| + | uv \cdot \texttt{(} \mathrm{d}u \texttt{,~} \mathrm{d}v \texttt{)} & + & | ||

| + | u \texttt{(} v \texttt{)} \cdot \texttt{(} \mathrm{d}u \texttt{,~} \mathrm{d}v \texttt{)} & + & | ||

| + | \texttt{(} u \texttt{)} v \cdot \texttt{(} \mathrm{d}u \texttt{,~} \mathrm{d}v \texttt{)} & + & | ||

| + | \texttt{(} u \texttt{)(} v \texttt{)} \cdot \texttt{(} \mathrm{d}u \texttt{,~} \mathrm{d}v \texttt{)} | ||

| + | \end{matrix}</math> | ||

|} | |} | ||

| − | <math>\mathrm{D}g</math> tells you what you would have to do, from | + | In general, <math>\mathrm{D}g\!</math> tells you what you would have to do, from wherever you are in the universe <math>[u, v],\!</math> if you want to bring about a change in the value of <math>g,\!</math> that is, if you want to get to a place where the value of <math>g\!</math> is different from what it is where you are. In the present case, where the ruling proposition <math>g\!</math> is <math>\texttt{((} u \texttt{,~} v \texttt{))},\!</math> the term <math>uv \cdot \texttt{(} \mathrm{d}u \texttt{,~} \mathrm{d}v \texttt{)}\!</math> of <math>\mathrm{D}g\!</math> tells you this: If <math>u\!</math> and <math>v\!</math> are both true where you are, then you would have to change one or the other but not both <math>u\!</math> and <math>v\!</math> in order to reach a place where the value of <math>g\!</math> is different from what it is where you are. |

| − | Figure 2.4 approximates <math>\mathrm{D}g</math> by the linear form <math>\mathrm{d}g</math> that expands over <math>[u, v]\!</math> as follows: | + | Figure 2.4 approximates <math>\mathrm{D}g\!</math> by the linear form <math>{\mathrm{d}g}\!</math> that expands over <math>[u, v]\!</math> as follows: |

{| align="center" cellpadding="8" width="90%" | {| align="center" cellpadding="8" width="90%" | ||

| | | | ||

| − | <math>\begin{array}{ | + | <math>\begin{array}{*{9}{l}} |

\mathrm{d}g | \mathrm{d}g | ||

| − | & = & \texttt{ | + | & = & uv \cdot \texttt{(} \mathrm{d}u \texttt{,~} \mathrm{d}v \texttt{)} |

| − | \\ | + | & + & u \texttt{(} v \texttt{)} \cdot \texttt{(} \mathrm{d}u \texttt{,~} \mathrm{d}v \texttt{)} |

| − | & = & \texttt{( | + | & + & \texttt{(} u \texttt{)} v \cdot \texttt{(} \mathrm{d}u \texttt{,~} \mathrm{d}v \texttt{)} |

| + | & + & \texttt{(} u \texttt{)(} v \texttt{)} \cdot \texttt{(} \mathrm{d}u \texttt{,~} \mathrm{d}v \texttt{)} | ||

| + | \\[8pt] | ||

| + | & = & \texttt{(} \mathrm{d}u \texttt{,~} \mathrm{d}v \texttt{)} | ||

\end{array}</math> | \end{array}</math> | ||

|} | |} | ||

| − | Figure 2.5 shows what remains of the difference map <math>\mathrm{D}g</math> when the first order linear contribution <math>\mathrm{d}g</math> is removed, namely: | + | Figure 2.5 shows what remains of the difference map <math>\mathrm{D}g\!</math> when the first order linear contribution <math>{\mathrm{d}g}\!</math> is removed, namely: |

{| align="center" cellpadding="8" width="90%" | {| align="center" cellpadding="8" width="90%" | ||

| | | | ||

| − | <math>\begin{ | + | <math>\begin{array}{*{9}{l}} |

\mathrm{r}g | \mathrm{r}g | ||

| − | & = & | + | & = & uv \cdot 0 |

| − | \\ | + | & + & u \texttt{(} v \texttt{)} \cdot 0 |

| − | & = & | + | & + & \texttt{(} u \texttt{)} v \cdot 0 |

| − | \end{ | + | & + & \texttt{(} u \texttt{)(} v \texttt{)} \cdot 0 |

| + | \\[8pt] | ||

| + | & = & 0 | ||

| + | \end{array}</math> | ||

|} | |} | ||

| Line 1,711: | Line 1,732: | ||

</pre> | </pre> | ||

|} | |} | ||

| − | |||

| − | |||

{| align="center" border="0" cellpadding="10" | {| align="center" border="0" cellpadding="10" | ||

| Line 1,757: | Line 1,776: | ||

</pre> | </pre> | ||

|} | |} | ||

| − | |||

| − | |||

{| align="center" border="0" cellpadding="10" | {| align="center" border="0" cellpadding="10" | ||

| Line 1,803: | Line 1,820: | ||

</pre> | </pre> | ||

|} | |} | ||

| − | |||

| − | |||

{| align="center" border="0" cellpadding="10" | {| align="center" border="0" cellpadding="10" | ||

| Line 1,849: | Line 1,864: | ||

</pre> | </pre> | ||

|} | |} | ||

| − | |||

| − | |||

{| align="center" border="0" cellpadding="10" | {| align="center" border="0" cellpadding="10" | ||

Revision as of 17:35, 28 September 2013

Author: Jon Awbrey

The task ahead is to chart a course from general ideas about transformational equivalence classes of graphs to a notion of differential analytic turing automata (DATA). It may be a while before we get within sight of that goal, but it will provide a better measure of motivation to name the thread after the envisioned end rather than the more homely starting place.

The basic idea is as follows. One has a set \(\mathcal{G}\) of graphs and a set \(\mathcal{T}\) of transformation rules, and each rule \(\mathrm{t} \in \mathcal{T}\) has the effect of transforming graphs into graphs, \(\mathrm{t} : \mathcal{G} \to \mathcal{G}.\) In the cases that we shall be studying, this set of transformation rules partitions the set of graphs into transformational equivalence classes (TECs).

There are many interesting excursions to be had here, but I will focus mainly on logical applications, and so the TECs I talk about will almost always have the character of logical equivalence classes (LECs).

An example that will figure heavily in the sequel is given by rooted trees as the species of graphs and a pair of equational transformation rules that derive from the graphical calculi of C.S. Peirce, as revived and extended by George Spencer Brown.

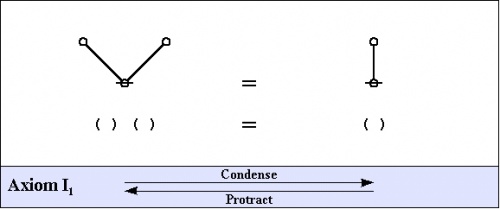

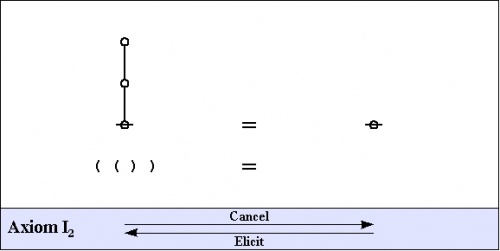

Here are the fundamental transformation rules, also referred to as the arithmetic axioms, more precisely, the arithmetic initials.

|

(1) |

|

(2) |

That should be enough to get started.
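As a small illustration of how a set of rules sorts expressions into equivalence classes, the following sketch assumes that the two arithmetic initials take their usual Spencer Brown form, written here on parenthesis strings as `()() = ()` and `(()) = ` (blank). The string encoding and the name `reduce_form` are assumptions made for the sake of the example, not notation taken from this paper.

```python
def reduce_form(s: str) -> str:
    """Rewrite a balanced parenthesis string until neither rule applies.

    Assumed rules (hypothetical string encoding of the arithmetic initials):
      "(())" -> ""     an enclosure of an enclosure cancels
      "()()" -> "()"   two adjacent empty enclosures condense to one
    """
    changed = True
    while changed:
        changed = False
        for old, new in (("(())", ""), ("()()", "()")):
            if old in s:
                s = s.replace(old, new, 1)  # apply one rule instance at a time
                changed = True
    return s


# Every balanced string lands in one of two classes: "" or "()".
for form in ["(()())", "()()()", "((()))", "(()(()))"]:
    print(repr(form), "->", repr(reduce_form(form)))
```

Under these assumptions the normal form serves as a label for the transformational equivalence class of each expression.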

Cactus Language

I will be making use of the cactus language extension of Peirce's Alpha Graphs, so called because it uses a species of graphs that are usually called "cacti" in graph theory. The last exposition of the cactus syntax that I've written can be found here:

The representational and computational efficiency of the cactus language for the tasks that are usually associated with boolean algebra and propositional calculus makes it possible to entertain a further extension, to what we may call differential logic, because it develops this basic level of logic in the same way that differential calculus augments analytic geometry to handle change and diversity. There are several different introductions to differential logic that I have written and distributed across the Internet. You might start with the following couple of treatments:

I will draw on those previously advertised resources of notation and theory as needed, but right now I sense the need for some concrete examples.

Example 1

Let's say we have a system that is known by the name of its state space \(X\!\) and we have a boolean state variable \(x : X \to \mathbb{B},\!\) where \(\mathbb{B} = \{ 0, 1 \}.\!\)

We observe \(X\!\) for a while, relative to a discrete time frame, and we write down the following sequence of values for \(x.\!\)

\(\begin{array}{c|c} t & x \end{array}\)

Notions of Approximation

for equalities are so weighed
that curiosity in neither can
make choice of either's moiety.
King Lear, Sc.1.5–7 (Quarto)

for qualities are so weighed
that curiosity in neither can
make choice of either's moiety.
King Lear, 1.1.5–6 (Folio)

Justifying a notion of approximation is a little more involved in general, and especially in these discrete logical spaces, than it would be expedient for people in a hurry to tangle with right now. I will just say that there are naive or obvious notions and there are sophisticated or subtle notions that we might choose among. The latter would engage us in trying to construct proper logical analogues of Lie derivatives, and so let's save that for when we have become subtle or sophisticated or both. Against or toward that day, as you wish, let's begin with an option in plain view.

Figure 1.4 illustrates one way of ranging over the cells of the underlying universe \(U^\bullet = [u, v]\!\) and selecting at each cell the linear proposition in \(\mathrm{d}U^\bullet = [\mathrm{d}u, \mathrm{d}v]\!\) that best approximates the patch of the difference map \({\mathrm{D}f}\!\) that is located there, yielding the following formula for the differential \(\mathrm{d}f.\!\)

Figure 2.4 illustrates one way of ranging over the cells of the underlying universe \(U^\bullet = [u, v]\!\) and selecting at each cell the linear proposition in \(\mathrm{d}U^\bullet = [\mathrm{d}u, \mathrm{d}v]\!\) that best approximates the patch of the difference map \(\mathrm{D}g\!\) that is located there, yielding the following formula for the differential \(\mathrm{d}g.\!\)

Well, \(g,\!\) that was easy, seeing as how \(\mathrm{D}g\!\) is already linear at each locus, \(\mathrm{d}g = \mathrm{D}g.\!\)

Analytic Series

We have been conducting the differential analysis of the logical transformation \(F : [u, v] \to [u, v]\!\) defined as \(F : (u, v) \mapsto ( ~ \texttt{((} u \texttt{)(} v \texttt{))} ~,~ \texttt{((} u \texttt{,~} v \texttt{))} ~ ),\!\) and this means starting with the extended transformation \(\mathrm{E}F : [u, v, \mathrm{d}u, \mathrm{d}v] \to [u, v, \mathrm{d}u, \mathrm{d}v]\!\) and breaking it into an analytic series, \(\mathrm{E}F = F + \mathrm{d}F + \mathrm{d}^2 F + \ldots,\!\) and so on until there is nothing left to analyze any further. As a general rule, one proceeds by way of the following stages:

In our analysis of the transformation \(F,\!\) we carried out Step 1 in the more familiar form \(\mathrm{E}F = F + \mathrm{D}F\!\) and we have just reached Step 2 in the form \(\mathrm{D}F = \mathrm{d}F + \mathrm{r}F,\!\) where \(\mathrm{r}F\!\) is the residual term that remains for us to examine next.

Note. I am trying to give a quick overview here, and this forces me to omit many picky details. The picky reader may wish to consult the more detailed presentation of this material at the following locations:

Let's push on with the analysis of the transformation:

For ease of comparison and computation, I will collect the Figures that we need for the remainder of the work together on one page.

Computation Summary for Logical Disjunction

Figure 1.1 shows the expansion of \(f = \texttt{((} u \texttt{)(} v \texttt{))}\!\) over \([u, v]\!\) to produce the expression:

Figure 1.2 shows the expansion of \(\mathrm{E}f = \texttt{((} u + \mathrm{d}u \texttt{)(} v + \mathrm{d}v \texttt{))}\!\) over \([u, v]\!\) to produce the expression:

In general, \(\mathrm{E}f\!\) tells you what you would have to do, from wherever you are in the universe \([u, v],\!\) if you want to end up in a place where \(f\!\) is true. In this case, where the prevailing proposition \(f\!\) is \(\texttt{((} u \texttt{)(} v \texttt{))},\!\) the indication \(uv \cdot \texttt{(} \mathrm{d}u ~ \mathrm{d}v \texttt{)}\!\) of \(\mathrm{E}f\!\) tells you this: If \(u\!\) and \(v\!\) are both true where you are, then just don't change both \(u\!\) and \(v\!\) at once, and you will end up in a place where \(\texttt{((} u \texttt{)(} v \texttt{))}\!\) is true.

Figure 1.3 shows the expansion of \(\mathrm{D}f\!\) over \([u, v]\!\) to produce the expression:

In general, \({\mathrm{D}f}\!\) tells you what you would have to do, from wherever you are in the universe \([u, v],\!\) if you want to bring about a change in the value of \(f,\!\) that is, if you want to get to a place where the value of \(f\!\) is different from what it is where you are. In the present case, where the reigning proposition \(f\!\) is \(\texttt{((} u \texttt{)(} v \texttt{))},\!\) the term \(uv \cdot \mathrm{d}u ~ \mathrm{d}v\!\) of \({\mathrm{D}f}\!\) tells you this: If \(u\!\) and \(v\!\) are both true where you are, then you would have to change both \(u\!\) and \(v\!\) in order to reach a place where the value of \(f\!\) is different from what it is where you are.

Figure 1.4 approximates \({\mathrm{D}f}\!\) by the linear form \(\mathrm{d}f\!\) that expands over \([u, v]\!\) as follows:
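The expansions of \(\mathrm{E}f,\) \(\mathrm{D}f,\) \(\mathrm{d}f,\) and \(\mathrm{r}f\) can also be checked by brute force over the sixteen points of \([u, v, \mathrm{d}u, \mathrm{d}v].\) The sketch below is a minimal one under stated assumptions: boolean values are coded as 0 and 1, sums are taken mod 2, and the differential is computed from boolean partial derivatives, \(\mathrm{d}p = \partial_u p \cdot \mathrm{d}u + \partial_v p \cdot \mathrm{d}v.\) That formula is an assumption chosen because it reproduces the entries of the computation summaries; the Python names `E`, `D`, `d`, `r`, and `expansion` are hypothetical.

```python
from itertools import product

def f(u, v):            # ((u)(v)) : logical disjunction
    return u | v

def g(u, v):            # ((u, v)) : logical equality
    return 1 ^ (u ^ v)

def E(p):               # enlargement: value of p after changing u by du, v by dv
    return lambda u, v, du, dv: p(u ^ du, v ^ dv)

def D(p):               # difference: Dp = Ep + p (mod 2)
    return lambda u, v, du, dv: p(u ^ du, v ^ dv) ^ p(u, v)

def d(p):               # differential (assumed form): linear in du, dv at each cell
    def dp(u, v, du, dv):
        p_u = p(1, v) ^ p(0, v)      # does flipping u alone change p?
        p_v = p(u, 1) ^ p(u, 0)      # does flipping v alone change p?
        return (p_u & du) ^ (p_v & dv)
    return dp

def r(p):               # remainder: rp = Dp + dp (mod 2)
    return lambda u, v, du, dv: D(p)(u, v, du, dv) ^ d(p)(u, v, du, dv)

def expansion(q):
    """Map each cell (u, v) to the values of q over (du, dv) = 00, 01, 10, 11."""
    return {(u, v): tuple(q(u, v, du, dv) for du, dv in product((0, 1), repeat=2))
            for u, v in product((0, 1), repeat=2)}

for name, q in [("Ef", E(f)), ("Df", D(f)), ("df", d(f)), ("rf", r(f))]:
    print(name, expansion(q))
```

Replacing `f` by `g` in the last loop gives the corresponding rows for logical equality, where the remainder vanishes at every cell.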

A finer combing of the second Table brings to mind a rule that partly covers the remaining cases, that is, \(\texttt{du~=~v}, ~\texttt{dv~=~(u)}.\) This much information about Orbit 2 is also encapsulated by the extended proposition, \(\texttt{(uv)((du, v))(dv, u)},\) which says that \(u\!\) and \(v\!\) are not both true at the same time, while \(du\!\) is equal in value to \(v\!\) and \(dv\!\) is opposite in value to \(u.\!\)

Turing Machine Example

By way of providing a simple illustration of Cook's Theorem, namely, that “Propositional Satisfiability is NP-Complete”, I will describe one way to translate finite approximations of turing machines into propositional expressions, using the cactus language syntax for propositional calculus that I will describe in more detail as we proceed.

I will follow the pattern of discussion in Herbert Wilf (1986), Algorithms and Complexity, pp. 188–201, but translate his logical formalism into cactus language, which is more efficient in regard to the number of propositional clauses that are required. A turing machine for computing the parity of a bit string is described by means of the following Figure and Table.

The TM has a finite automaton (FA) as one component. Let us refer to this particular FA by the name of \(\mathrm{M}.\) The tape head (that is, the read unit) will be called \(\mathrm{H}.\) The registers are also called tape cells or tape squares.

Finite Approximations

To see how each finite approximation to a given turing machine can be given a purely propositional description, one fixes the parameter \(k\!\) and limits the rest of the discussion to describing \(\mathrm{Stilt}(k),\!\) which is not really a full-fledged TM anymore but just a finite automaton in disguise.

In this example, for the sake of a minimal illustration, we choose \(k = 2,\!\) and discuss \(\mathrm{Stunt}(2).\) Since the zeroth tape cell and the last tape cell are both occupied by the character \(^{\backprime\backprime}\texttt{\#}^{\prime\prime}\) that is used for both the beginning of file \((\mathrm{bof})\) and the end of file \((\mathrm{eof})\) markers, this allows for only one digit of significant computation.

To translate \(\mathrm{Stunt}(2)\) into propositional form we use the following collection of basic propositions, boolean variables, or logical features, depending on what one prefers to call them:

The basic propositions for describing the present state function \(\mathrm{QF} : P \to Q\) are these:

The proposition of the form \(\texttt{pi\_qj}\) says:

The basic propositions for describing the present register function \(\mathrm{RF} : P \to R\) are these:

The proposition of the form \(\texttt{pi\_rj}\) says:

The basic propositions for describing the present symbol function \(\mathrm{SF} : P \to (R \to S)\) are these:

The proposition of the form \(\texttt{pi\_rj\_sk}\) says:

Initial Conditions

Given but a single free square on the tape, there are just two different sets of initial conditions for \(\mathrm{Stunt}(2),\) the finite approximation to the parity turing machine that we are presently considering.

Initial Conditions for Tape Input "0"

The following conjunction of 5 basic propositions describes the initial conditions when \(\mathrm{Stunt}(2)\) is started with an input of "0" in its free square:

This conjunction of basic propositions may be read as follows:

Initial Conditions for Tape Input "1"

The following conjunction of 5 basic propositions describes the initial conditions when \(\mathrm{Stunt}(2)\) is started with an input of "1" in its free square:

This conjunction of basic propositions may be read as follows:

Propositional Program

A complete description of \(\mathrm{Stunt}(2)\) in propositional form is obtained by conjoining one of the above choices for initial conditions with all of the following sets of propositions, that serve in effect as a simple type of declarative program, telling us all that we need to know about the anatomy and behavior of the truncated TM in question.

Mediate Conditions

Terminal Conditions

State Partition

Register Partition

Symbol Partition

Interaction Conditions

Transition Relations

Interpretation of the Propositional Program

Let us now run through the propositional specification of \(\mathrm{Stunt}(2),\) our truncated TM, and paraphrase what it says in ordinary language.

Mediate Conditions

In the interpretation of the cactus language for propositional logic that we are using here, an expression of the form \(\texttt{(p(q))}\) expresses a conditional, an implication, or an if-then proposition, commonly read in one of the following ways:

A text string expression of the form \(\texttt{(p(q))}\) corresponds to a graph-theoretic data-structure of the following form:

Taken together, the Mediate Conditions state the following:

Terminal Conditions

In cactus syntax, an expression of the form \(\texttt{((p)(q))}\) expresses the disjunction \(p ~\mathrm{or}~ q.\) The corresponding cactus graph, here just a tree, has the following shape:

In effect, the Terminal Conditions state the following:

State Partition

In cactus syntax, an expression of the form \(\texttt{((} e_1 \texttt{),(} e_2 \texttt{),(} \ldots \texttt{),(} e_k \texttt{))}\!\) expresses a statement to the effect that exactly one of the expressions \(e_j\!\) is true, for \(j = 1 ~\mathit{to}~ k.\) Expressions of this form are called universal partition expressions, and the corresponding painted and rooted cactus (PARC) has the following shape:

The State Partition segment of the propositional program consists of three universal partition expressions, taken in conjunction, expressing the condition that \(\mathrm{M}\) has to be in one and only one of its states at each point in time under consideration. In short, we have the constraint:

Register Partition

The Register Partition segment of the propositional program consists of three universal partition expressions, taken in conjunction, saying that the read head \(\mathrm{H}\) must be reading one and only one of the registers or tape cells available to it at each of the points in time under consideration. In sum:

Symbol Partition

The Symbol Partition segment of the propositional program for \(\mathrm{Stunt}(2)\) consists of nine universal partition expressions, taken in conjunction, stipulating that there has to be one and only one symbol in each of the registers at each point in time under consideration. In short, we have:

Interaction Conditions

In briefest terms, the Interaction Conditions simply express the circumstance that the mark on a tape cell cannot change between two points in time unless the tape head is over the cell in question at the initial one of those points in time. All that we have to do is to see how they manage to say this. Consider a cactus expression of the following form:

This expression has the corresponding cactus graph:

A propositional expression of this form can be read as follows:

The eighteen clauses of the Interaction Conditions simply impose one such constraint on symbol changes for each combination of the times \(p_0, p_1,\!\) registers \(r_0, r_1, r_2,\!\) and symbols \(s_0, s_1, s_\#.\!\)

Transition Relations

The Transition Relation segment of the propositional program for \(\mathrm{Stunt}(2)\) consists of sixteen implication statements with complex antecedents and consequents. Taken together, these give propositional expression to the TM Figure and Table that were given at the outset. Just by way of a single example, consider the clause:

This complex implication statement can be read to say:

Computation

The propositional program for \(\mathrm{Stunt}(2)\) uses the following set of \(9 + 12 + 36 = 57\!\) basic propositions or boolean variables:

This means that the propositional program itself is nothing but a single proposition or boolean function of the form \(p : \mathbb{B}^{57} \to \mathbb{B}.\)

An assignment of boolean values to the above set of boolean variables is called an interpretation of the proposition \(p,\!\) and any interpretation of \(p\!\) that makes the proposition \(p : \mathbb{B}^{57} \to \mathbb{B}\) evaluate to \(1\!\) is referred to as a satisfying interpretation of the proposition \(p.\!\)

Another way to specify interpretations, instead of giving them as bit vectors in \(\mathbb{B}^{57}\) and trying to remember some arbitrary ordering of variables, is to give them in the form of singular propositions, that is, a conjunction of the form \(e_1 \cdot \ldots \cdot e_{57}\) where each \(e_j\!\) is either \(v_j\!\) or \(\texttt{(} v_j \texttt{)},\) that is, either the assertion or the negation of the boolean variable \({v_j},\!\) as \(j\!\) runs from 1 to 57. Even more briefly, the same information can be communicated simply by giving the conjunction of the asserted variables, with the understanding that each of the others is negated.

A satisfying interpretation of the proposition \(p\!\) supplies us with all the information of a complete execution history for the corresponding program, and so all we have to do in order to get the output of the program \(p\!\) is to read off the proper part of the data from the expression of this interpretation.

Output

One component of the \(\begin{smallmatrix}\mathrm{Theme~One}\end{smallmatrix}\) program that I wrote some years ago finds all the satisfying interpretations of propositions expressed in cactus syntax. It's not a polynomial time algorithm, as you may guess, but it was just barely efficient enough to do this example in the 500 K of spare memory that I had on an old 286 PC in about 1989, so I will give you the actual outputs from those trials.
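To make the notion of a satisfying interpretation concrete, here is a minimal brute-force sketch in Python. It is not the Theme One algorithm, only an exhaustive search over all assignments. It assumes the usual cactus-language reading in which concatenation is conjunction and a lobe \(\texttt{(} e_1 \texttt{,} \ldots \texttt{,} e_k \texttt{)}\) is true just when exactly one of the \(e_j\) is false, which agrees with the readings of \(\texttt{(p(q))},\) \(\texttt{((p)(q))},\) and the universal partition expressions given above. The function names are hypothetical, and variable names are restricted to letters, digits, and underscores.

```python
import re
from itertools import product

TOKEN = re.compile(r"\s*([(),]|[A-Za-z_][A-Za-z0-9_]*)")

def tokenize(text):
    text, tokens, pos = text.rstrip(), [], 0
    while pos < len(text):
        m = TOKEN.match(text, pos)
        if not m:
            raise ValueError(f"unexpected character at position {pos}")
        tokens.append(m.group(1))
        pos = m.end()
    return tokens

def parse(text):
    """Return a conjunction: a list of terms, each a name or a tuple of conjunctions."""
    tokens, pos = tokenize(text), 0
    def conj():
        nonlocal pos
        terms = []
        while pos < len(tokens) and tokens[pos] not in (")", ","):
            if tokens[pos] == "(":
                pos += 1
                parts = [conj()]
                while pos < len(tokens) and tokens[pos] == ",":
                    pos += 1
                    parts.append(conj())
                if pos >= len(tokens) or tokens[pos] != ")":
                    raise ValueError("unbalanced parentheses")
                pos += 1
                terms.append(tuple(parts))
            else:
                terms.append(tokens[pos])
                pos += 1
        return terms
    tree = conj()
    if pos != len(tokens):
        raise ValueError("unexpected token")
    return tree

def evaluate(tree, env):
    for term in tree:
        if isinstance(term, str):
            value = env[term]
        else:  # lobe: true when exactly one enclosed expression is false
            value = sum(not evaluate(part, env) for part in term) == 1
        if not value:
            return False
    return True

def variables(tree):
    names = set()
    for term in tree:
        if isinstance(term, str):
            names.add(term)
        else:
            for part in term:
                names |= variables(part)
    return names

def satisfying_interpretations(text):
    """List each satisfying interpretation by its asserted variables only."""
    tree = parse(text)
    names = sorted(variables(tree))
    found = []
    for bits in product([False, True], repeat=len(names)):
        env = dict(zip(names, bits))
        if evaluate(tree, env):
            found.append(" ".join(n for n in names if env[n]))
    return found

print(satisfying_interpretations("((a),(b),(c))"))  # exactly one of a, b, c
```

Each result string lists the asserted variables of one satisfying interpretation, with every other variable understood to be negated. The search visits all \(2^n\) assignments, so it is only practical for small examples.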
Output Conditions for Tape Input "0"

Let \(p_0\!\) be the proposition that we get by conjoining the proposition that describes the initial conditions for tape input "0" with the proposition that describes the truncated turing machine \(\mathrm{Stunt}(2).\) As it turns out, \(p_0\!\) has a single satisfying interpretation. This interpretation is expressible in the form of a singular proposition, which can in turn be indicated by its positive logical features, as shown in the following display:

The Output Conditions for Tape Input "0" can be read as follows:

The output of \(\mathrm{Stunt}(2)\) being the symbol that rests under the tape head \(\mathrm{H}\) if and when the machine \(\mathrm{M}\) reaches one of its resting states, we get the result that \(\mathrm{Parity}(0) = 0.\)

Output Conditions for Tape Input "1"

Let \(p_1\!\) be the proposition that we get by conjoining the proposition that describes the initial conditions for tape input "1" with the proposition that describes the truncated turing machine \(\mathrm{Stunt}(2).\) As it turns out, \(p_1\!\) has a single satisfying interpretation. This interpretation is expressible in the form of a singular proposition, which can in turn be indicated by its positive logical features, as shown in the following display:

The Output Conditions for Tape Input "1" can be read as follows:

The output of \(\mathrm{Stunt}(2)\) being the symbol that rests under the tape head \(\mathrm{H}\) when and if the machine \(\mathrm{M}\) reaches one of its resting states, we get the result that \(\mathrm{Parity}(1) = 1.\)

Work Area

DATA 20. http://forum.wolframscience.com/showthread.php?postid=791#post791

Let's see how this information about the transformation F, arrived at by eyeballing the raw data, comports with what we derived through a more systematic symbolic computation.

The results of the various operator actions that we have just computed are summarized in Tables 66-i and 66-ii from my paper, and I have attached these as a text file below.

    Table 66-i.  Computation Summary for f<u, v> = ((u)(v))
    o--------------------------------------------------------------------------------o
    |                                                                                |
    | !e!f  =  uv.    1      + u(v).    1      + (u)v.    1      + (u)(v).    0      |
    |                                                                                |
    | Ef    =  uv. (du  dv)  + u(v). (du (dv)) + (u)v.((du) dv)  + (u)(v).((du)(dv)) |
    |                                                                                |
    | Df    =  uv.  du  dv   + u(v).  du (dv)  + (u)v. (du) dv   + (u)(v).((du)(dv)) |
    |                                                                                |
    | df    =  uv.    0      + u(v).  du       + (u)v.       dv  + (u)(v). (du, dv)  |
    |                                                                                |
    | rf    =  uv.  du  dv   + u(v).  du  dv   + (u)v.  du  dv   + (u)(v).  du  dv   |
    |                                                                                |
    o--------------------------------------------------------------------------------o

    Table 66-ii.  Computation Summary for g<u, v> = ((u, v))
    o--------------------------------------------------------------------------------o
    |                                                                                |
    | !e!g  =  uv.    1      + u(v).    0      + (u)v.    0      + (u)(v).    1      |
    |                                                                                |
    | Eg    =  uv.((du, dv)) + u(v). (du, dv)  + (u)v. (du, dv)  + (u)(v).((du, dv)) |
    |                                                                                |
    | Dg    =  uv. (du, dv)  + u(v). (du, dv)  + (u)v. (du, dv)  + (u)(v). (du, dv)  |
    |                                                                                |
    | dg    =  uv. (du, dv)  + u(v). (du, dv)  + (u)v. (du, dv)  + (u)(v). (du, dv)  |
    |                                                                                |
    | rg    =  uv.    0      + u(v).    0      + (u)v.    0      + (u)(v).    0      |
    |                                                                                |
    o--------------------------------------------------------------------------------o

    o---------------------------------------o
    |                                       |
    |                   o                   |
    |                  / \                  |
    |                 /   \                 |
    |                /     \                |
    |               o       o               |
    |              / \     / \              |
    |             /   \   /   \             |
    |            /     \ /     \            |
    |           o       o       o           |
    |          / \     / \     / \          |
    |         /   \   /   \   /   \         |
    |        /     \ /     \ /     \        |
    |       o       o       o       o       |
    |      / \     / \     / \     / \      |
    |     /   \   /   \   /   \   /   \     |
    |    /     \ /     \ /     \ /     \    |
    |   o       o       o       o       o   |
    |   |\     / \     / \     / \     /|   |
    |   | \   /   \   /   \   /   \   / |   |
    |   |  \ /     \ /     \ /     \ /  |   |
    |   |   o       o       o       o   |   |
    |   |   |\     / \     / \     /|   |   |
    |   |   | \   /   \   /   \   / |   |   |
    |   | u |  \ /     \ /     \ /  | v |   |
    |   o---+---o       o       o---+---o   |
    |       |    \     / \     /    |       |
    |       |     \   /   \   /     |       |
    |       | du   \ /     \ /   dv |       |
    |       o-------o       o-------o       |
    |                \     /                |
    |                 \   /                 |
    |                  \ /                  |
    |                   o                   |
    |                                       |
    o---------------------------------------o

Discussion

PD = Philip Dutton

PD: I've been watching your posts.

PD: I am not an expert on logic infrastructures but I find the posts

interesting (despite not understanding much of it). I am like

the diagrams. I have recently been trying to understand CA's

using a particular perspective: sinks and sources. I think

that all CA's are simply combinations of sinks and sources.

How they interact (or intrude into each other's domains)

would most likely be a result of the rules (and initial

configuration of on or off cells).

PD: Anyway, to be short, I "see" diamond shapes quite often in

your diagrams. Triangles (either up or down) or diamonds

(combination of an up and down triangle) make me think

solely of sinks and sources. I think of the diamond to

be a source which, during the course of progression,

is expanding (because it is producing) and then starts

to act as a sink (because it consumes) -- and hence the

diamond. I can't stop thinking about sinks and sources in

CA's and so I thought I would ask you if there is some way

to tie the two worlds together (CA's of sinks and sources

together with your differential constructs).

PD: Any thoughts?

Yes, I'm hoping that there's a lot of stuff analogous to

R-world dynamics to be discovered in this B-world variety,

indeed, that's kind of why I set out on this investigation --

oh, gee, has it been that long? -- I guess about 1989 or so,

when I started to see this "differential logic" angle on what

I had previously studied in systems theory as the "qualitative

approach to differential equations". I think we used to use the

words "attractor" and "basin" more often than "sink", but a source

is still a source as time goes by, and I do remember using the word

"sink" a lot when I was a freshperson in physics, before I got logic.

I have spent the last 15 years doing a funny mix of practice in stats

and theory in math, but I did read early works by Von Neumann, Burks,

Ulam, and later stuff by Holland on CA's. Still, it may be a while

before I have re-heated my concrete intuitions about them in the

NKS way of thinking.

There are some fractal-looking pictures that emerge when

I turn to "higher order propositional expressions" (HOPE's).

I have discussed this topic elsewhere on the web and can look

it up now if you are interested, but I am trying to make my

e-positions somewhat clearer for the NKS forum than I have

tried to do before.

But do not hesitate to dialogue all this stuff on the boards,

as that's what always seems to work the best. What I've found

works best for me, as I can hardly remember what I was writing

last month without Google, is to archive a copy at one of the

other Google-visible discussion lists that I'm on at present.

Document History

Ontology List : Feb–Mar 2004

NKS Forum : Feb–Jun 2004

Inquiry List : Feb–Jun 2004
